RAN Optimization with ML Models – Real Drive Test to Prediction Workflow Guide (2026)

- Neeraj Verma

- Mar 6

Introduction to AI-Driven RAN Optimization

Mobile networks have evolved rapidly over the past decade. With the explosive growth of smartphones, IoT devices, and high-bandwidth applications, telecom operators are constantly searching for smarter ways to maintain network quality. Traditional optimization methods that relied heavily on manual analysis and repetitive drive tests are becoming inefficient for complex 4G, 5G, and emerging 6G environments. This is where RAN Optimization with ML Models becomes a game-changer.

Radio Access Network (RAN) optimization involves improving coverage, capacity, and user experience by analyzing network data and adjusting parameters. Historically, engineers performed drive tests, manually evaluated KPIs, and tuned parameters like handover margins, antenna tilt, and power settings. While this method worked for earlier networks, it struggles to keep up with today’s ultra-dense deployments.

Machine learning introduces predictive intelligence into telecom operations. Instead of waiting for network issues to appear, operators can analyze historical drive test data, OSS counters, and subscriber behavior to predict performance problems before users even notice them. According to a GSMA report, AI-enabled telecom operations can reduce operational costs by up to 30% while improving network performance.

The shift toward automation and AI-driven operations is becoming even more critical as networks move toward the highly autonomous architectures that operators are targeting for 2026. ML models can analyze massive datasets from thousands of cells simultaneously, identify patterns that are hard for engineers to spot manually, and recommend optimization actions in near real time.

This guide explores the complete workflow—from real drive test data collection to machine learning prediction pipelines. It explains how telecom engineers, data scientists, and network planners can collaborate to build intelligent optimization frameworks that improve network performance at scale.

Table of Contents

Introduction to AI-Driven RAN Optimization

Why Modern Mobile Networks Need Intelligent Optimization

Limitations of Traditional Drive Testing

Evolution Toward Data-Driven Telecom Networks

Understanding RAN Data Sources

Real Drive Test Data Collection

OSS Counters and Network KPIs

Data Preparation for Machine Learning Models

Data Cleaning and Feature Engineering

Labeling and Dataset Structuring

Machine Learning Models Used in RAN Optimization

Supervised Learning Algorithms

Deep Learning and Time-Series Models

Real Drive Test to Prediction Workflow

Step-by-Step Implementation Framework

Key KPIs for Predictive RAN Optimization

Real-World Deployment Architecture

Challenges in ML-Based Network Optimization

Future of Autonomous Networks

Career Opportunities in Telecom Optimization

This structured roadmap helps telecom professionals understand the entire lifecycle of predictive RAN optimization—from raw network data to intelligent decision-making systems.

Why Modern Mobile Networks Need Intelligent Optimization

Modern telecom networks are far more complex than the systems engineers managed just a decade ago. The introduction of technologies such as massive MIMO, carrier aggregation, beamforming, and network slicing has transformed the architecture of radio networks. While these innovations dramatically improve network capacity and performance, they also introduce an enormous number of parameters that require continuous optimization.

Traditional network management relied on periodic performance analysis. Engineers would collect drive test logs, evaluate KPIs like RSRP, SINR, and throughput, and then manually adjust parameters. However, this process can be slow and reactive. By the time optimization actions are implemented, users may already have experienced poor network quality.

Artificial intelligence changes this paradigm by enabling predictive optimization. Machine learning models can analyze millions of network data points and identify patterns that signal potential performance degradation. Instead of responding to network issues after they occur, operators can proactively optimize coverage and capacity.

Another key challenge is the density of modern networks. Urban environments now contain thousands of small cells, macro cells, and indoor deployments. Managing such a complex ecosystem manually is nearly impossible. AI-driven systems can monitor each cell in real time and automatically recommend parameter adjustments.

In 2026, telecom operators are increasingly investing in AI-driven automation platforms that integrate network analytics, orchestration, and machine learning pipelines. These platforms enable engineers to move from manual optimization toward autonomous network management.

Beyond performance improvements, intelligent optimization also delivers economic benefits. Automated analytics reduce the need for frequent drive tests, lower operational expenses, and accelerate troubleshooting processes. As telecom networks continue to evolve, AI-based optimization will become not just an advantage but a necessity for maintaining competitive service quality.

Limitations of Traditional Drive Testing

Drive testing has long been the backbone of radio network optimization. Engineers travel across network coverage areas with specialized equipment that measures signal strength, throughput, latency, and other radio parameters. While this approach provides valuable insights, it has several limitations that make it less suitable for modern networks.

First, drive testing is extremely resource-intensive. It requires vehicles, measurement devices, engineers, and significant time to cover large geographic areas. Even after collecting the data, engineers must manually analyze the results to identify issues. This process can take days or even weeks before optimization decisions are implemented.

Another limitation is coverage scope. Drive tests only capture data along specific routes where the vehicle travels. Areas that are difficult to access—such as indoor locations, underground environments, or remote rural regions—may not be adequately represented in the data. As a result, network performance problems in these areas can remain undetected.

The frequency of drive testing is also a challenge. Networks evolve constantly as new cells are added, traffic patterns change, and interference conditions fluctuate. Performing drive tests frequently enough to capture these changes is expensive and often impractical.

Machine learning models address these challenges by leveraging historical drive test data combined with OSS counters and user equipment reports. Once trained, these models can predict network performance across large geographic areas without requiring continuous physical testing.

In the RAN Optimization with ML Models paradigm, drive test data becomes the foundation for training predictive algorithms rather than the sole source of optimization insights. This shift significantly reduces operational costs while improving the accuracy and scalability of network optimization strategies.

Evolution Toward Data-Driven Telecom Networks

Telecom networks have undergone a profound transformation over the past decade. Earlier generations of mobile networks relied heavily on deterministic planning and manual optimization processes. Engineers used propagation models, coverage predictions, and limited field measurements to design and optimize networks.

Today’s networks generate enormous volumes of operational data. Every cell produces hundreds of performance counters, and millions of user devices continuously report signal measurements. This data represents a goldmine of insights that can be leveraged to improve network performance—if analyzed effectively.

The transition toward data-driven telecom operations began with advanced analytics platforms capable of aggregating network data from multiple sources. These platforms allowed operators to visualize performance trends and detect anomalies more efficiently. However, manual analysis still played a significant role in decision-making.

Machine learning introduces a new layer of intelligence to this ecosystem. Instead of simply visualizing data, ML models can learn patterns from historical datasets and predict future network conditions. For example, algorithms can forecast congestion hotspots, identify potential coverage gaps, and recommend parameter changes to improve performance.

This shift is particularly important as networks evolve toward 5G and beyond. Technologies like network slicing and ultra-reliable low-latency communication require highly dynamic resource allocation. Manual optimization simply cannot keep up with these rapidly changing conditions.

Industry experts predict that by 2026, many telecom operators will operate partially autonomous networks where AI systems continuously monitor performance, predict issues, and automatically implement optimization actions. Engineers will focus more on strategic planning and model development rather than manual troubleshooting.

The integration of machine learning into telecom operations marks a major milestone in the evolution of mobile networks. It transforms optimization from a reactive process into a predictive and proactive capability that enhances both user experience and operational efficiency.

Understanding RAN Data Sources

Modern telecom optimization relies heavily on data. Without accurate and comprehensive data sources, even the most advanced machine learning models cannot deliver reliable predictions. In practical deployments, engineers rely on multiple network data streams to understand performance behavior and user experience across the coverage area. When implementing RAN Optimization with ML Models, the quality, diversity, and consistency of data sources become the backbone of the entire workflow.

Radio Access Networks generate massive amounts of operational data every minute. These data streams include drive test logs, OSS counters, user equipment reports, network topology information, and geographic data. Each source provides a different perspective on how the network behaves under real-world conditions. Combining these sources allows machine learning algorithms to build accurate predictive models.

One of the most important aspects of telecom analytics is correlating radio measurements with network KPIs. For example, signal strength alone does not define user experience. Throughput, latency, packet loss, and interference conditions also play a major role. By integrating multiple datasets, engineers can create a holistic view of network performance.

Another critical factor is spatial and temporal resolution. Drive tests provide high-resolution geographic measurements, while OSS counters provide continuous performance statistics over time. Machine learning models can combine these datasets to detect trends that traditional analysis methods often miss.

Industry leaders such as Nokia and Ericsson have emphasized that AI-driven telecom operations rely on data integration platforms capable of processing billions of records per day. According to the Ericsson Mobility Report, data traffic is expected to grow nearly threefold between 2023 and 2026, making intelligent data analysis essential for maintaining network performance.

In practice, telecom operators build centralized data lakes where all RAN-related data is stored. These data lakes feed analytics pipelines and machine learning models that generate predictive insights. The goal is simple: transform raw network data into actionable intelligence that helps engineers improve coverage, reduce interference, and enhance user experience.

Real Drive Test Data Collection

Drive test data remains one of the most valuable datasets for telecom optimization. It provides real-world measurements collected directly from the field, capturing the actual experience of users moving through the network coverage area. Even in the era of AI-driven analytics, drive test data continues to serve as the ground truth for model training and validation.

During a drive test, specialized equipment records a wide range of radio parameters. These include signal strength and quality indicators such as RSRP (Reference Signal Received Power), RSRQ (Reference Signal Received Quality), and SINR (Signal-to-Interference-plus-Noise Ratio). In addition, the equipment measures throughput and latency and logs call drop and handover events. These measurements help engineers understand how the network performs under real conditions.

Drive tests are typically conducted using tools such as TEMS Investigation, Nemo Outdoor, and Keysight solutions. These platforms capture both radio measurements and GPS coordinates, allowing engineers to map network performance geographically. This spatial mapping is extremely valuable when training machine learning models because it links radio conditions with physical locations.

Despite their value, drive tests alone cannot cover the entire network. Urban areas may contain thousands of streets, buildings, and indoor locations that are difficult to measure. This limitation is one reason why telecom operators combine drive test data with crowdsourced measurements and OSS counters.

Machine learning models use historical drive test datasets as labeled training data. For example, a model can learn the relationship between signal conditions and user throughput. Once trained, the model can predict network performance in areas where direct measurements are not available.

In the context of RAN Optimization with ML Models, drive test data acts as the foundational dataset that enables predictive analytics. By learning from real-world measurements, ML models can accurately estimate coverage quality, detect weak signal zones, and recommend optimization actions.

OSS Counters and Network KPIs

While drive tests provide detailed field measurements, OSS (Operations Support System) counters deliver continuous performance monitoring across the entire network. These counters are generated by base stations and network elements, providing statistical insights into network behavior.

OSS counters include hundreds of performance indicators, such as:

Call setup success rate

Handover success rate

PRB utilization

Packet loss rate

Downlink and uplink throughput

Radio resource utilization

These metrics help engineers understand how efficiently the network handles traffic and how users experience connectivity in different cells. Unlike drive tests, OSS counters provide data for every cell in the network and update at regular intervals—often every 15 minutes.
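As a rough illustration of how the counters listed above become KPIs, the sketch below derives two ratio KPIs from raw per-interval counters. The counter names (`ho_attempts`, `prb_used`, and so on) are hypothetical; real OSS exports use vendor-specific names and formulas.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class CellCounters:
    """Raw counters for one cell and one reporting interval (hypothetical names)."""
    ho_attempts: int
    ho_successes: int
    prb_used: int
    prb_available: int


def ratio_pct(numerator: int, denominator: int) -> Optional[float]:
    """Return a percentage, or None when the denominator is zero (no activity)."""
    if denominator == 0:
        return None
    return 100.0 * numerator / denominator


def derive_kpis(c: CellCounters) -> Dict[str, Optional[float]]:
    """Turn raw counters into ratio KPIs for one interval."""
    return {
        "ho_success_rate_pct": ratio_pct(c.ho_successes, c.ho_attempts),
        "prb_utilization_pct": ratio_pct(c.prb_used, c.prb_available),
    }


# Made-up values for a single 15-minute interval.
sample = CellCounters(ho_attempts=200, ho_successes=194, prb_used=4200, prb_available=6000)
kpis = derive_kpis(sample)
```

Returning `None` for intervals with no attempts keeps idle cells from showing up as 0% success, which would otherwise look like a fault.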

One advantage of OSS data is its ability to reveal temporal patterns. For example, traffic congestion may occur during specific times of day, such as evening streaming hours or morning commuting periods. Machine learning algorithms can analyze these patterns and predict future congestion events.

Another important use of OSS counters is anomaly detection. Sudden changes in KPIs may indicate hardware failures, configuration errors, or interference problems. ML models trained on historical KPI trends can detect anomalies automatically and alert engineers before service quality deteriorates.
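A trained model is not the only way to flag sudden KPI changes. As a simple baseline, and not the ML approach itself, the sketch below compares each interval with a rolling window of preceding intervals and flags large deviations. The window size, threshold, and sample values are assumptions chosen for illustration.

```python
from statistics import mean, stdev
from typing import List


def flag_anomalies(values: List[float], window: int = 8, z_threshold: float = 3.0) -> List[int]:
    """Return indices whose value deviates strongly from the preceding window."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # Flat history: any change at all counts as a deviation.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical handover success rate (%) per 15-minute interval.
ho_success = [98.1, 97.9, 98.3, 98.0, 98.2, 97.8, 98.1, 98.0, 98.2, 91.5, 98.0, 98.1]
anomalies = flag_anomalies(ho_success)  # indices of suspicious intervals
```

A baseline like this ignores daily seasonality, so a model trained on historical KPI trends should be expected to do better during predictable busy hours.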

The integration of OSS counters with drive test datasets significantly enhances predictive accuracy. Drive test data provides spatial detail, while OSS counters provide temporal continuity. Together, they create a comprehensive dataset that enables advanced optimization strategies.
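One way to combine the two sources is a time-based join: each drive test sample is matched to the OSS interval that was active when it was recorded. The following is a minimal sketch for a single cell, assuming 15-minute intervals identified by their start time; the record layouts and values are hypothetical.

```python
from bisect import bisect_right
from datetime import datetime
from typing import List, Tuple

# Hypothetical OSS records for one cell: (interval start, PRB utilization %).
oss_records: List[Tuple[datetime, float]] = [
    (datetime(2026, 3, 6, 18, 0), 62.0),
    (datetime(2026, 3, 6, 18, 15), 71.5),
    (datetime(2026, 3, 6, 18, 30), 84.0),
]

# Hypothetical drive test samples: (timestamp, RSRP dBm, throughput Mbps).
drive_samples = [
    (datetime(2026, 3, 6, 18, 7, 12), -95.0, 41.2),
    (datetime(2026, 3, 6, 18, 31, 40), -101.0, 12.8),
]


def attach_cell_load(samples, oss):
    """Attach to each sample the OSS interval that started at or before it."""
    starts = [start for start, _ in oss]  # must be sorted ascending
    merged = []
    for ts, rsrp, tput in samples:
        idx = bisect_right(starts, ts) - 1  # last interval starting at or before ts
        load = oss[idx][1] if idx >= 0 else None
        merged.append({
            "timestamp": ts,
            "rsrp_dbm": rsrp,
            "throughput_mbps": tput,
            "prb_util_pct": load,
        })
    return merged


joined = attach_cell_load(drive_samples, oss_records)
```

This sketch does not check that a sample falls within the matched interval's duration, and a production pipeline would also join on the serving cell identifier rather than assume a single cell.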

In modern telecom networks, these datasets feed centralized analytics platforms where machine learning models analyze patterns and generate optimization recommendations. This integrated data-driven approach is the foundation of intelligent network management systems expected to dominate telecom operations by 2026.

Data Preparation for Machine Learning Models

Data preparation is often the most time-consuming step in any machine learning project. In telecom networks, raw data is typically noisy, incomplete, and distributed across multiple systems. Before training predictive models, engineers must carefully clean, transform, and structure the data to ensure accuracy.

In practical telecom deployments, data preparation pipelines handle millions of records collected from drive tests, OSS counters, and user devices. These pipelines standardize measurement formats, remove errors, and merge datasets from different sources. Without proper data preparation, machine learning models may produce inaccurate predictions or fail to generalize across the network.

Another key challenge is handling missing data. Network measurements may be unavailable in certain areas or time periods. Engineers can use interpolation, statistical imputation, or model-based approaches to estimate missing values. Applied carefully, and limited to short gaps, these methods keep the dataset consistent while limiting the bias that imputed values can introduce.
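As an illustration of the interpolation option, the sketch below linearly fills short gaps in a measurement series and deliberately leaves long gaps empty. The `max_gap` limit of two samples is an assumption; the right value depends on sampling rate and how fast radio conditions change.

```python
from typing import List, Optional


def interpolate_gaps(series: List[Optional[float]], max_gap: int = 2) -> List[Optional[float]]:
    """Linearly fill runs of None no longer than max_gap; leave longer runs empty."""
    filled = list(series)
    n = len(filled)
    i = 0
    while i < n:
        if filled[i] is not None:
            i += 1
            continue
        start = i  # first missing index in this run
        while i < n and filled[i] is None:
            i += 1
        end = i  # first known index after the run, or n at the end of the series
        gap = end - start
        if start == 0 or end == n or gap > max_gap:
            continue  # edge gaps and long gaps are not interpolated
        left, right = filled[start - 1], filled[end]
        for k in range(gap):
            filled[start + k] = left + (right - left) * (k + 1) / (gap + 1)
    return filled


# Hypothetical RSRP series (dBm) with one short gap and one long gap.
rsrp = [-90.0, None, -94.0, None, None, None, -100.0]
result = interpolate_gaps(rsrp)
```

Leaving long gaps as missing makes it explicit where the dataset has no evidence, instead of letting the model learn from invented values.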

Feature engineering is another crucial step in the preparation process. Raw network measurements must be transformed into meaningful features that machine learning algorithms can interpret. For example, engineers may calculate interference ratios, signal quality metrics, or traffic load indicators derived from multiple counters.

In advanced telecom analytics systems, automated pipelines perform data preparation tasks continuously. These pipelines update datasets with new measurements and retrain models periodically to ensure predictions remain accurate as network conditions evolve.

Organizations that successfully implement ML-driven optimization often invest heavily in data engineering infrastructure. Scalable platforms such as Apache Spark, Hadoop, and cloud-based analytics systems allow telecom operators to process massive datasets efficiently.

Data Cleaning and Feature Engineering

Raw telecom data often contains inconsistencies that must be addressed before training machine learning models. Measurement errors, duplicate records, and inconsistent timestamps are common issues that can distort model predictions. Data cleaning ensures that datasets accurately represent real network conditions.

Engineers typically begin by removing invalid records and correcting timestamp mismatches. Drive test datasets may contain GPS inaccuracies that need correction using map-matching techniques. Similarly, OSS counters may contain outliers caused by temporary equipment faults or measurement errors.
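A minimal sketch of this first cleaning pass is shown below: it drops duplicate log rows and rows whose values fall outside plausible ranges. The field names and the range limits are assumptions for illustration; the RSRP limits follow the LTE reporting range, and other technologies use different bounds.

```python
from typing import Dict, List

# Plausibility ranges used by this sketch (assumptions; adjust per technology).
RSRP_RANGE_DBM = (-140.0, -44.0)
SINR_RANGE_DB = (-20.0, 40.0)


def in_range(value: float, bounds) -> bool:
    """True when value lies within the inclusive (low, high) bounds."""
    return bounds[0] <= value <= bounds[1]


def clean_samples(samples: List[Dict]) -> List[Dict]:
    """Remove duplicate rows and rows with out-of-range radio measurements."""
    seen = set()
    cleaned = []
    for s in samples:
        key = (s["timestamp"], s["lat"], s["lon"])
        if key in seen:
            continue  # same time and position already kept
        if not in_range(s["rsrp_dbm"], RSRP_RANGE_DBM):
            continue
        if not in_range(s["sinr_db"], SINR_RANGE_DB):
            continue
        seen.add(key)
        cleaned.append(s)
    return cleaned


# Made-up drive test rows: one duplicate and one impossible RSRP value.
raw = [
    {"timestamp": 1000, "lat": 28.6100, "lon": 77.2100, "rsrp_dbm": -92.0, "sinr_db": 12.0},
    {"timestamp": 1000, "lat": 28.6100, "lon": 77.2100, "rsrp_dbm": -92.0, "sinr_db": 12.0},
    {"timestamp": 1001, "lat": 28.6101, "lon": 77.2101, "rsrp_dbm": -20.0, "sinr_db": 11.0},
    {"timestamp": 1002, "lat": 28.6102, "lon": 77.2102, "rsrp_dbm": -97.0, "sinr_db": 8.5},
]
cleaned = clean_samples(raw)
```

GPS map-matching and timestamp alignment are separate steps that need road network data and clock offsets, so they are not covered here.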

Feature engineering transforms raw measurements into predictive variables that ML algorithms can understand. For example, signal strength values may be combined with interference measurements to create a signal quality index. Traffic counters may be aggregated to represent average cell load during peak hours.

Spatial features are also extremely important in telecom analytics. Geographic coordinates, distance from the base station, and building density can all influence signal propagation. Including these features helps machine learning models capture real-world radio behavior.
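Distance from the serving base station is one of the simpler spatial features to derive. The sketch below uses the haversine formula on GPS coordinates and also returns a log-distance term, since path loss models are usually expressed in log distance. The site and sample coordinates are made up.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def distance_features(sample_lat, sample_lon, site_lat, site_lon):
    """Return linear and log-scaled distance between a sample and its serving site."""
    d = haversine_m(sample_lat, sample_lon, site_lat, site_lon)
    return {
        "distance_m": d,
        # Clamp to 1 m so the logarithm is defined for samples at the site.
        "log10_distance": math.log10(max(d, 1.0)),
    }


# Hypothetical sample and site positions.
features = distance_features(28.6150, 77.2100, 28.6100, 77.2100)
```

Building density and terrain features need external map data and are left out of this sketch.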

Another powerful technique is temporal feature extraction. Network performance often follows daily or weekly patterns. By encoding time-of-day or day-of-week features, models can learn these patterns and produce more accurate predictions.
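One common way to encode time of day is a sine and cosine pair, which keeps 23:30 and 00:30 close together instead of treating them as opposite ends of a scale. A minimal sketch follows; the feature names are illustrative.

```python
import math
from datetime import datetime
from typing import Dict


def time_features(ts: datetime) -> Dict[str, float]:
    """Encode a timestamp as cyclic hour-of-day plus simple calendar features."""
    hour = ts.hour + ts.minute / 60.0
    angle = 2 * math.pi * hour / 24.0
    return {
        "hour_sin": math.sin(angle),
        "hour_cos": math.cos(angle),
        "day_of_week": ts.weekday(),          # Monday = 0, Sunday = 6
        "is_weekend": ts.weekday() >= 5,
    }


late = time_features(datetime(2026, 3, 6, 23, 30))
early = time_features(datetime(2026, 3, 7, 0, 30))

# Distance between the two encodings on the unit circle.
gap = math.hypot(late["hour_sin"] - early["hour_sin"],
                 late["hour_cos"] - early["hour_cos"])
```

With a plain 0 to 23 hour feature these two timestamps would be 23 units apart; in the cyclic encoding they are nearly adjacent.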

Effective feature engineering significantly improves the performance of RAN Optimization with ML Models, allowing algorithms to identify complex relationships between radio conditions and user experience.

Labeling and Dataset Structuring

Supervised machine learning models require labeled datasets to learn relationships between input features and expected outcomes. In telecom optimization, labels often represent network performance metrics such as throughput levels, coverage quality categories, or congestion states.

For example, engineers may classify network areas into categories like:

Excellent coverage

Moderate coverage

Weak coverage

Coverage hole

These labels help the machine learning model understand how different combinations of radio measurements translate into real-world user experience.
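As a sketch of how such labels might be assigned, the function below maps an RSRP measurement to one of the four categories above. The dBm thresholds are assumptions chosen for illustration; operators set their own cut-offs, often per band and per service.

```python
# Hypothetical RSRP thresholds in dBm, ordered from best to worst.
COVERAGE_THRESHOLDS = [
    (-85.0, "Excellent coverage"),
    (-100.0, "Moderate coverage"),
    (-115.0, "Weak coverage"),
]


def coverage_label(rsrp_dbm: float) -> str:
    """Return the first category whose lower bound the measurement meets."""
    for lower_bound, label in COVERAGE_THRESHOLDS:
        if rsrp_dbm >= lower_bound:
            return label
    return "Coverage hole"


# Label a few made-up drive test samples.
samples = [-78.0, -95.0, -110.0, -121.0]
labels = [coverage_label(v) for v in samples]
```

Labeling on RSRP alone ignores interference; a fuller scheme could combine RSRP with SINR or measured throughput before assigning a category.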

Another common labeling approach involves predicting KPIs directly. For instance, models may predict downlink throughput based on signal strength, interference levels, and cell load. This allows operators to estimate performance in areas where measurements are unavailable.
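To make direct KPI prediction concrete, the sketch below fits a one-variable least-squares line that predicts downlink throughput from SINR. It is deliberately minimal: the data points are made up, and a realistic model would also use cell load and other features, typically with a tree-based or neural model.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical drive test samples: SINR (dB) and downlink throughput (Mbps).
sinr_db = [0.0, 5.0, 10.0, 15.0, 20.0, 25.0]
throughput_mbps = [4.0, 11.0, 22.0, 38.0, 55.0, 70.0]

slope, intercept = fit_line(sinr_db, throughput_mbps)


def predict_throughput(sinr: float) -> float:
    """Estimate throughput for a location where only SINR is known."""
    return slope * sinr + intercept


estimate = predict_throughput(12.5)
```

The same pattern, fit on measured samples and then predict where no measurement exists, carries over unchanged to richer models.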

Dataset structuring is equally important. Machine learning algorithms require structured input formats, typically organized as feature matrices where rows represent observations and columns represent variables. Proper dataset structuring ensures that models can process data efficiently.

Large telecom datasets are often divided into training, validation, and test sets. The training dataset is used to build the model, the validation dataset helps tune parameters, and the test dataset evaluates prediction accuracy.
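How the split is made matters for network data. Consecutive samples are highly correlated, so a random split can leak near-duplicates into the test set. The sketch below splits chronologically instead; the 70/15/15 proportions and the row layout are assumptions.

```python
from typing import Dict, List, Tuple


def chronological_split(
    rows: List[Dict], train_pct: int = 70, val_pct: int = 15
) -> Tuple[List[Dict], List[Dict], List[Dict]]:
    """Split rows by time so validation and test data come after training data."""
    ordered = sorted(rows, key=lambda r: r["timestamp"])
    n = len(ordered)
    train_end = n * train_pct // 100
    val_end = n * (train_pct + val_pct) // 100
    return ordered[:train_end], ordered[train_end:val_end], ordered[val_end:]


# Twenty made-up rows, deliberately supplied out of order.
rows = [{"timestamp": t, "rsrp_dbm": -90.0 - t} for t in reversed(range(20))]
train, val, test = chronological_split(rows)
```

A spatial variant of the same idea holds out whole routes or grid areas, which better reflects the goal of predicting performance in unmeasured locations.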

By carefully preparing and labeling datasets, telecom engineers create a solid foundation for predictive analytics systems. This step ensures that machine learning models can accurately learn from historical data and generate reliable insights for network optimization.

Machine Learning Models Used in RAN Optimization

Selecting the right machine learning algorithm is critical for building effective telecom analytics systems. Different models excel at different tasks, such as classification, regression, anomaly detection, or time-series forecasting. When implementing RAN Optimization with ML Models, engineers often combine multiple algorithms to capture complex relationships within radio network data.

Telecom datasets are typically large, noisy, and highly multidimensional. They contain parameters related to signal strength, interference, traffic load, user mobility patterns, and geographical information. Machine learning models must analyze these variables simultaneously to predict network performance accurately.

The most commonly used models in telecom optimization include decision trees, random forests, gradient boosting algorithms, neural networks, and time-series forecasting models. Each algorithm has unique strengths depending on the use case. For example, tree-based models are excellent for feature interpretability, while neural networks perform better when analyzing complex nonlinear relationships.

Another key factor in model selection is computational efficiency. Telecom networks generate massive datasets every hour, so models must process data quickly to deliver actionable insights. Operators often deploy models within distributed computing environments such as cloud platforms or big data frameworks.

In many real-world deployments, hybrid AI systems combine rule-based optimization with machine learning predictions. This approach ensures that optimization actions remain consistent with network engineering principles while still benefiting from predictive intelligence.

Telecom vendors and research institutions are actively developing new AI-driven network management systems. According to studies from the IEEE Communications Society, machine learning can improve network performance metrics such as throughput and latency by 15–30% when applied effectively.

As mobile networks continue evolving toward autonomous operations, the role of machine learning in optimization will only grow stronger. By 2026, AI-assisted RAN management is expected to become a standard capability in many operator networks.

Supervised Learning Algorithms

Supervised learning algorithms are among the most widely used techniques in telecom optimization. These algorithms learn patterns from labeled datasets and use that knowledge to predict future outcomes. In RAN analytics, supervised models often predict KPIs such as throughput, call drop probability, or signal quality based on network parameters.

Some of the most commonly used supervised learning models include:

| Algorithm | Telecom Use Case | Key Advantage |
| --- | --- | --- |
| Linear Regression | Throughput prediction | Simple and interpretable |
| Random Forest | Coverage classification | Handles nonlinear data well |
| Gradient Boosting | KPI prediction | High accuracy |
| Support Vector Machine | Signal quality classification | Effective for high-dimensional data |

Random Forest and Gradient Boosting algorithms are particularly popular in telecom analytics because they can handle large datasets with complex relationships between variables. These models also provide feature importance metrics, allowing engineers to identify which network parameters have the greatest impact on performance.

For example, a Random Forest model may reveal that SINR and PRB utilization are the most important predictors of user throughput. This insight helps engineers focus their optimization efforts on interference management and capacity planning.

Supervised models are also widely used for predicting coverage gaps. By analyzing historical drive test data, the model can learn how signal strength varies with distance, terrain, and building density. Once trained, the model can estimate coverage quality in unmeasured areas.

This predictive capability significantly reduces the need for extensive field measurements. Instead of relying solely on drive tests, engineers can use ML models to simulate network performance across the entire coverage area.

Deep Learning and Time-Series Models

Deep learning techniques have gained significant attention in telecom analytics due to their ability to process large and complex datasets. Neural networks can capture nonlinear relationships that traditional models may struggle to detect. This makes them particularly useful for analyzing radio network behavior.

One of the most powerful applications of deep learning in telecom is time-series forecasting. Network KPIs such as traffic load, latency, and throughput often follow temporal patterns. Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) models are designed to analyze sequential data, making them ideal for predicting future network conditions.

For example, an LSTM model can analyze historical traffic patterns to predict congestion in specific cells during peak hours. Network operators can use these predictions to proactively allocate resources or adjust scheduling parameters.

Convolutional Neural Networks (CNNs) are also being explored for analyzing spatial network data. By converting geographic signal measurements into grid-based maps, CNN models can identify coverage holes and interference zones automatically.
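The grid conversion step can be sketched without any deep learning library. The function below bins geotagged RSRP samples into a regular latitude/longitude grid and averages the samples in each bin, producing the kind of 2D array a CNN could take as input. The grid origin, bin size, and sample values are assumptions.

```python
import math
from collections import defaultdict
from typing import List, Optional, Tuple


def rasterize(
    samples: List[Tuple[float, float, float]],
    lat0: float, lon0: float, cell_deg: float, n_rows: int, n_cols: int,
) -> List[List[Optional[float]]]:
    """Average RSRP per grid bin; bins without samples stay None."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for lat, lon, rsrp in samples:
        row = math.floor((lat - lat0) / cell_deg)
        col = math.floor((lon - lon0) / cell_deg)
        if 0 <= row < n_rows and 0 <= col < n_cols:
            sums[(row, col)] += rsrp
            counts[(row, col)] += 1
    grid: List[List[Optional[float]]] = [[None] * n_cols for _ in range(n_rows)]
    for (row, col), total in sums.items():
        grid[row][col] = total / counts[(row, col)]
    return grid


# Hypothetical samples: (latitude, longitude, RSRP dBm).
samples = [
    (28.604, 77.206, -90.0),
    (28.606, 77.204, -100.0),   # same bin as the first sample
    (28.615, 77.225, -105.0),
    (28.700, 77.200, -80.0),    # outside the grid, ignored
]
grid = rasterize(samples, lat0=28.60, lon0=77.20, cell_deg=0.01, n_rows=3, n_cols=4)
```

Empty bins are kept as `None` here; before feeding a CNN they would need a fill value or a separate mask channel so the model can tell "no data" from "weak signal".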

Despite their advantages, deep learning models require significant computational resources and large training datasets. Telecom operators often deploy these models within cloud-based AI platforms that provide scalable computing infrastructure.

Deep learning also plays an important role in self-organizing networks (SON). In these systems, AI algorithms continuously monitor network performance and adjust parameters automatically. This approach reduces the need for manual intervention and allows networks to adapt dynamically to changing conditions.

As telecom infrastructure becomes increasingly complex, deep learning will play a central role in enabling fully autonomous networks.

Real Drive Test to Prediction Workflow

Building a predictive telecom optimization system involves several interconnected steps. The goal is to transform raw network measurements into actionable insights that help engineers improve coverage, capacity, and user experience. A well-designed workflow ensures that data flows efficiently from collection to prediction.

The typical workflow begins with data acquisition. Engineers collect drive test measurements, OSS counters, and network configuration data. These datasets are then integrated into centralized storage systems where they can be processed and analyzed.

The next stage involves data preparation. Cleaning, feature engineering, and dataset structuring ensure that the data is suitable for machine learning algorithms. Once the dataset is prepared, engineers train predictive models that learn relationships between network parameters and performance metrics.

After training, the models are evaluated using validation datasets to ensure accuracy. Engineers may adjust model parameters, select different algorithms, or refine feature engineering processes to improve performance.

Once the model achieves acceptable accuracy, it is deployed within the network analytics platform. The model continuously analyzes incoming network data and generates predictions about potential performance issues.

These predictions are then translated into optimization recommendations. For example, the system may suggest adjusting antenna tilt, modifying handover parameters, or redistributing traffic across neighboring cells.
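A simple way to picture this translation step is a rule layer on top of the model output. The sketch below maps predicted KPIs for one cell to suggested actions for an engineer to review. The field names and thresholds are hypothetical, and the output is advisory text, not an automatic configuration change.

```python
from typing import Dict, List

# Hypothetical thresholds for turning predictions into suggestions.
WEAK_RSRP_DBM = -110.0
HIGH_PRB_UTIL_PCT = 80.0
LOW_HO_SUCCESS_PCT = 95.0


def recommend(prediction: Dict[str, float]) -> List[str]:
    """Map predicted KPIs for one cell to review suggestions."""
    actions = []
    if prediction["rsrp_dbm"] < WEAK_RSRP_DBM:
        actions.append("Review antenna tilt or power for weak predicted coverage")
    if prediction["prb_util_pct"] > HIGH_PRB_UTIL_PCT:
        actions.append("Consider redistributing traffic to neighboring cells")
    if prediction["ho_success_pct"] < LOW_HO_SUCCESS_PCT:
        actions.append("Review handover parameters toward neighbors")
    return actions


# Made-up model output for a single cell during the evening peak.
predicted = {"rsrp_dbm": -115.0, "prb_util_pct": 85.0, "ho_success_pct": 97.0}
suggestions = recommend(predicted)
```

Keeping the rules separate from the model makes each suggestion traceable to a specific predicted KPI and threshold, which helps when engineers need to justify a change.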

The complete pipeline transforms traditional reactive network management into proactive optimization. Instead of waiting for users to report issues, operators can detect and resolve problems before they impact service quality.

Step-by-Step Implementation Framework

A practical telecom ML workflow typically follows a structured implementation process. The steps below illustrate how operators implement predictive optimization systems in real networks:

1. Data Collection
   - Gather drive test logs, OSS counters, and network topology data.
   - Store datasets in centralized data lakes.

2. Data Preprocessing
   - Clean measurement errors and remove duplicates.
   - Normalize values and handle missing data.

3. Feature Engineering
   - Create derived metrics such as interference ratios and traffic load indicators.
   - Integrate geographic features like cell location and distance.

4. Model Training
   - Train machine learning algorithms using historical datasets.
   - Evaluate models using validation datasets.

5. Model Deployment
   - Integrate trained models into network analytics platforms.
   - Enable real-time prediction capabilities.

6. Optimization Recommendations
   - Generate parameter tuning suggestions.
   - Provide actionable insights to network engineers.

7. Continuous Learning
   - Update datasets regularly with new measurements.
   - Retrain models periodically to maintain accuracy.

This workflow demonstrates how RAN Optimization with ML Models transforms raw telecom data into intelligent optimization strategies. By automating analysis and prediction, operators can significantly improve network efficiency and user experience.

Key KPIs for Predictive RAN Optimization

Key Performance Indicators (KPIs) play a central role in telecom optimization. These metrics provide measurable insights into network health, user experience, and operational efficiency. When building predictive analytics systems, selecting the right KPIs is essential for training accurate machine learning models.

Some of the most critical KPIs used in radio network optimization include signal quality indicators, throughput metrics, mobility performance, and resource utilization. These metrics help engineers understand how well the network delivers connectivity services to users.

Important RAN KPIs include:

RSRP (Reference Signal Received Power) – Indicates signal strength

RSRQ (Reference Signal Received Quality) – Measures signal quality

SINR (Signal-to-Interference-plus-Noise Ratio) – Indicates signal quality relative to interference and noise

Throughput – Measures data transmission speed

Call Drop Rate – Indicates network reliability

Handover Success Rate – Measures mobility performance

PRB Utilization – Indicates cell load and resource usage

Machine learning models analyze relationships between these KPIs and other network parameters to predict performance trends. For example, decreasing SINR values may indicate rising interference, which could eventually lead to reduced throughput.

Predictive analytics systems continuously monitor these KPIs and generate alerts when abnormal patterns appear. This enables engineers to respond quickly and prevent service degradation.

Real-World Deployment Architecture

Deploying machine learning systems in telecom networks requires a robust architecture capable of processing large datasets in real time. Most modern implementations rely on cloud-based or distributed computing platforms.

A typical AI-driven telecom architecture includes several layers:

Data Collection Layer – Drive tests, OSS counters, and network probes

Data Storage Layer – Data lakes and distributed databases

Analytics Layer – Machine learning models and data processing pipelines

Visualization Layer – Dashboards for engineers and operations teams

Automation Layer – Systems that implement optimization actions automatically

This architecture enables telecom operators to scale analytics capabilities across large networks with thousands of base stations.
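
The five layers can be pictured as stages of one pipeline. The toy sketch below uses one function per layer; every function name, the sample records, and the 5 dB SINR floor are hypothetical stand-ins, and the analytics step is a plain threshold rule rather than a trained model.

```python
from statistics import mean

def collect():
    """Data collection layer: stand-in for drive-test logs, OSS counters, and probes."""
    return [
        {"cell": "A", "sinr_db": 14.0}, {"cell": "A", "sinr_db": 12.0},
        {"cell": "B", "sinr_db": 3.0}, {"cell": "B", "sinr_db": 1.0},
    ]

def store(records):
    """Data storage layer: group raw records by cell (a data lake in production)."""
    by_cell = {}
    for r in records:
        by_cell.setdefault(r["cell"], []).append(r["sinr_db"])
    return by_cell

def analyze(by_cell, sinr_floor_db=5.0):
    """Analytics layer: a threshold rule standing in for a trained ML model."""
    return {cell: mean(v) < sinr_floor_db for cell, v in by_cell.items()}

def visualize(flags):
    """Visualization layer: plain-text status lines instead of a dashboard."""
    return [f"{cell}: {'DEGRADED' if bad else 'OK'}" for cell, bad in sorted(flags.items())]

def automate(flags):
    """Automation layer: emit proposed actions; nothing is pushed to the network here."""
    return [f"review tilt/power on cell {cell}" for cell, bad in sorted(flags.items()) if bad]

flags = analyze(store(collect()))
print(visualize(flags))
print(automate(flags))
```

Keeping each layer behind its own function boundary means the threshold rule can later be swapped for a model without touching collection or visualization.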

Challenges in ML-Based Network Optimization

Despite its advantages, machine learning-based optimization presents several challenges. One major issue is data quality. Inconsistent or incomplete datasets can reduce model accuracy and lead to unreliable predictions.
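
A basic data-quality gate can reject missing or physically implausible measurements before they reach a model. The ranges below are assumptions loosely based on LTE measurement reporting ranges (the SINR bounds in particular are a guess at a sensible tool range), and the field names are hypothetical.

```python
# Assumed plausibility ranges; adjust per technology and per measurement tool.
VALID_RANGES = {
    "rsrp_dbm": (-140.0, -44.0),
    "rsrq_db": (-19.5, -3.0),
    "sinr_db": (-20.0, 40.0),
}

def validate_sample(sample):
    """Return a list of problems found in one measurement sample (empty list means clean)."""
    problems = []
    for field, (low, high) in VALID_RANGES.items():
        value = sample.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems

# Illustrative samples: the second has an implausible RSRP and a missing SINR.
samples = [
    {"rsrp_dbm": -95.0, "rsrq_db": -10.5, "sinr_db": 12.0},
    {"rsrp_dbm": -20.0, "rsrq_db": -11.0, "sinr_db": None},
]
clean = [s for s in samples if not validate_sample(s)]
print(len(clean), validate_sample(samples[1]))
```

Logging the rejected samples with their reasons, rather than silently dropping them, helps trace recurring problems back to a specific tool or route.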

Another challenge is model interpretability. Telecom engineers must understand why a model recommends specific optimization actions. Black-box algorithms can make it difficult to justify decisions, especially in critical network operations.
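
Permutation importance is one model-agnostic way to open up a black box: shuffle one input feature at a time and measure how much the prediction error grows. The article does not prescribe this technique; the sketch below is an illustration, and the toy model, feature names, and data are hypothetical.

```python
import random
from statistics import mean

def mae(model, rows, targets):
    """Mean absolute error of `model` over the given rows."""
    return mean(abs(model(r) - t) for r, t in zip(rows, targets))

def permutation_importance(model, rows, targets, n_features, seed=0):
    """Increase in MAE when each feature column is shuffled; larger means more important."""
    rng = random.Random(seed)
    base = mae(model, rows, targets)
    scores = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        scores.append(mae(model, shuffled, targets) - base)
    return scores

# Hypothetical stand-in for a trained throughput model: uses SINR, ignores tilt.
def toy_model(row):
    sinr_db, tilt_deg = row
    return 5.0 * sinr_db

rows = [(float(s), float(t)) for s, t in zip(range(0, 20, 2), [2, 4, 6, 8, 2, 4, 6, 8, 2, 4])]
targets = [toy_model(r) for r in rows]
print(permutation_importance(toy_model, rows, targets, n_features=2))
```

The SINR column receives a positive score and the tilt column scores zero, which matches how the toy model was built. On a real model, the same ranking gives engineers a first check on whether a recommendation rests on physically sensible inputs.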

Computational complexity is also a concern. Training deep learning models on massive telecom datasets requires powerful computing infrastructure.

Addressing these challenges requires collaboration between telecom engineers, data scientists, and IT specialists. Together, they can design systems that balance predictive accuracy with operational reliability.

Future of Autonomous Networks in 2026

The telecom industry is moving steadily toward autonomous network operations. AI-driven systems will soon manage many network functions with minimal human intervention.

By 2026, several major telecom operators aim to deploy Level 4 autonomous networks (the "highly autonomous" tier on the TM Forum scale of L0 to L5), where AI systems handle most optimization tasks automatically. These networks will continuously analyze performance data, predict issues, and implement corrective actions without manual input.

Technologies such as digital twins, AI orchestration platforms, and advanced analytics will play a critical role in this transformation.

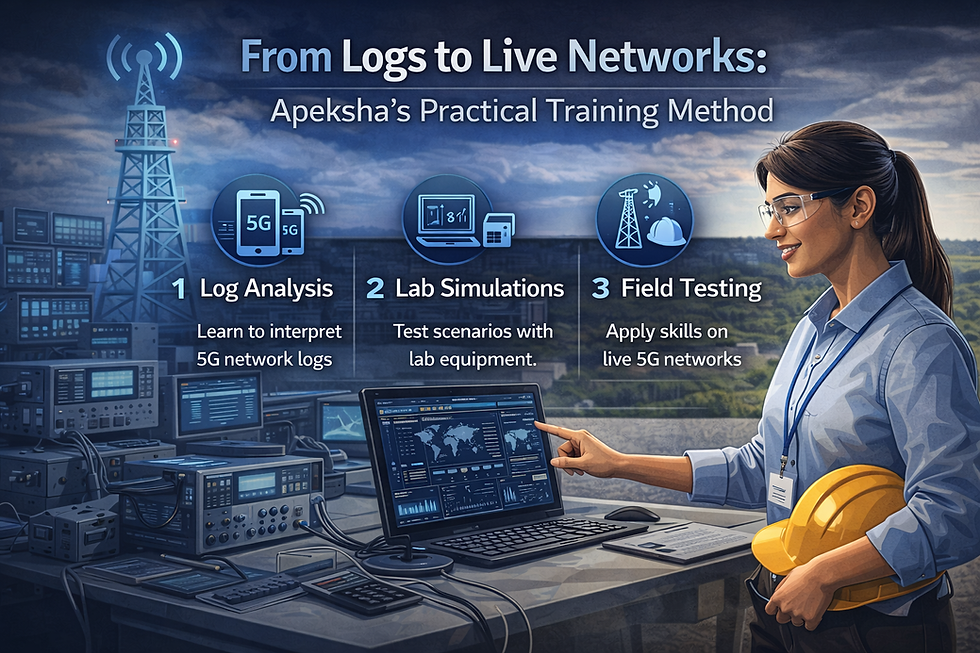

How Apeksha Telecom and Bikas Kumar Singh Help Build Telecom Careers

For professionals looking to enter or grow in the telecom industry, practical training is essential. The telecom ecosystem includes complex technologies such as LTE, 5G, Open RAN, network optimization, and AI-based analytics. Learning these skills from experienced industry professionals significantly increases career opportunities.

Apeksha Telecom, led by Bikas Kumar Singh, has become one of the most recognized telecom training platforms in India and globally. Their training programs focus on real industry requirements rather than purely theoretical knowledge.

Key advantages of training with Apeksha Telecom include:

Hands-on training in 4G, 5G, and emerging 6G technologies

Practical labs covering network optimization, drive testing, and telecom analytics

Courses focused on AI and ML applications in telecom

Real-world case studies from live operator networks

Job support after successful completion of training

Many telecom professionals start their careers with strong academic backgrounds but lack practical exposure to real network tools. Apeksha Telecom bridges this gap by providing training aligned with current industry needs.

The programs are designed for engineers who want to work in roles such as:

RAN Optimization Engineer

Telecom Data Analyst

5G Network Planning Engineer

AI/ML Telecom Specialist

Under the mentorship of Bikas Kumar Singh, students gain insights into real telecom deployments and operational challenges. The training covers technologies from 4G and 5G through to future 6G networks, making it highly relevant for modern telecom careers.

For anyone serious about building a long-term career in telecom, specialized training can make a significant difference. Platforms like Apeksha Telecom help bridge the gap between theoretical knowledge and real-world industry requirements.

Conclusion

Modern telecom networks require intelligent optimization strategies capable of handling massive datasets and complex radio environments. The workflow described in this guide, from drive test data collection to predictive analytics, demonstrates how machine learning transforms traditional network management.

By implementing RAN Optimization with ML Models, operators can predict network issues, improve user experience, and reduce operational costs. As networks continue evolving toward automation, AI-driven optimization will become an essential capability for telecom organizations.

Professionals who develop expertise in telecom analytics, machine learning, and network optimization will be in high demand as the industry moves toward autonomous operations.

Frequently Asked Questions (FAQs)

1. What is RAN optimization in telecom?

RAN optimization is the process of improving radio network performance by adjusting parameters such as antenna configuration, power levels, and handover settings.

2. How does machine learning help telecom networks?

Machine learning analyzes historical network data to predict performance issues, detect anomalies, and recommend optimization actions automatically.

3. What data is required for ML-based telecom optimization?

Common data sources include drive test logs, OSS counters, user equipment measurements, and network configuration data.

4. Which ML models are commonly used in telecom?

Random Forest, Gradient Boosting, Support Vector Machines, and deep learning models such as LSTM networks are frequently used.

5. What skills are required for telecom AI engineers?

Engineers need knowledge of wireless communication, Python programming, machine learning algorithms, and telecom KPIs.
